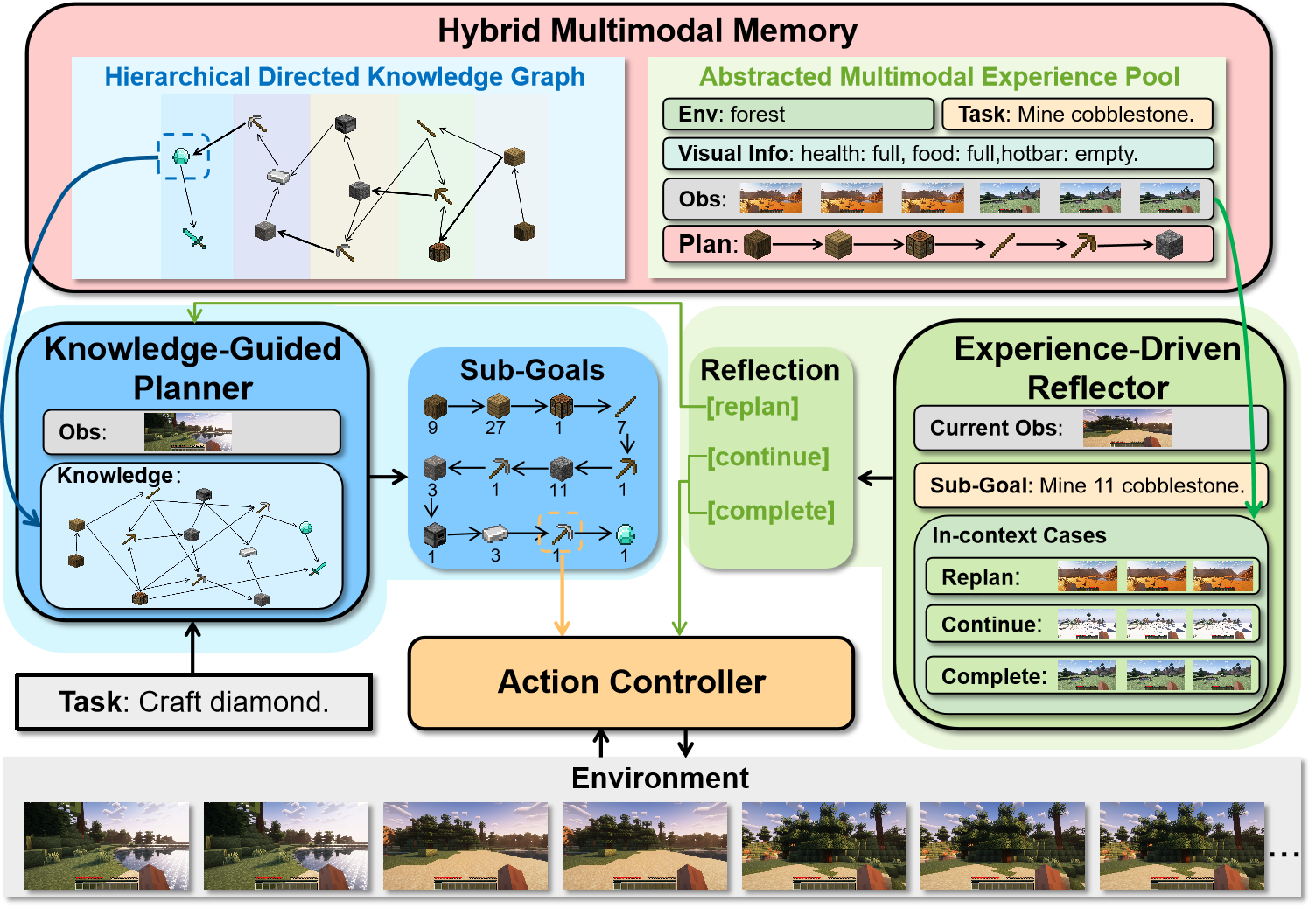

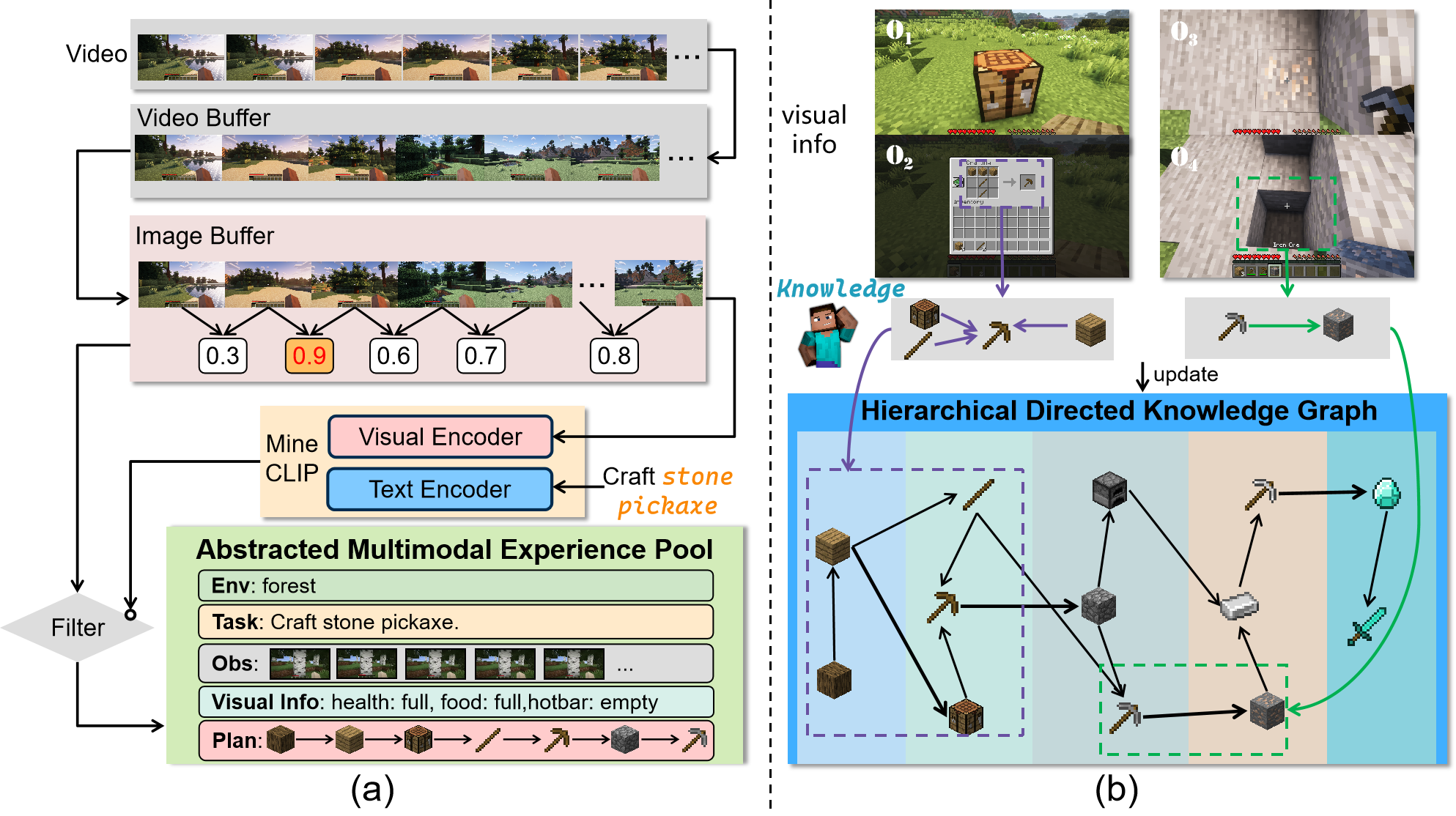

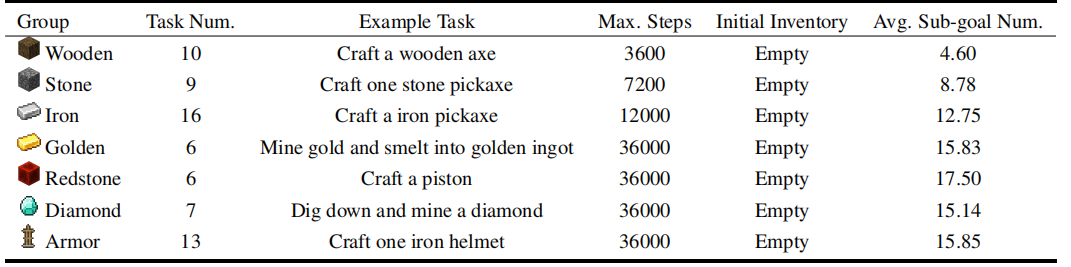

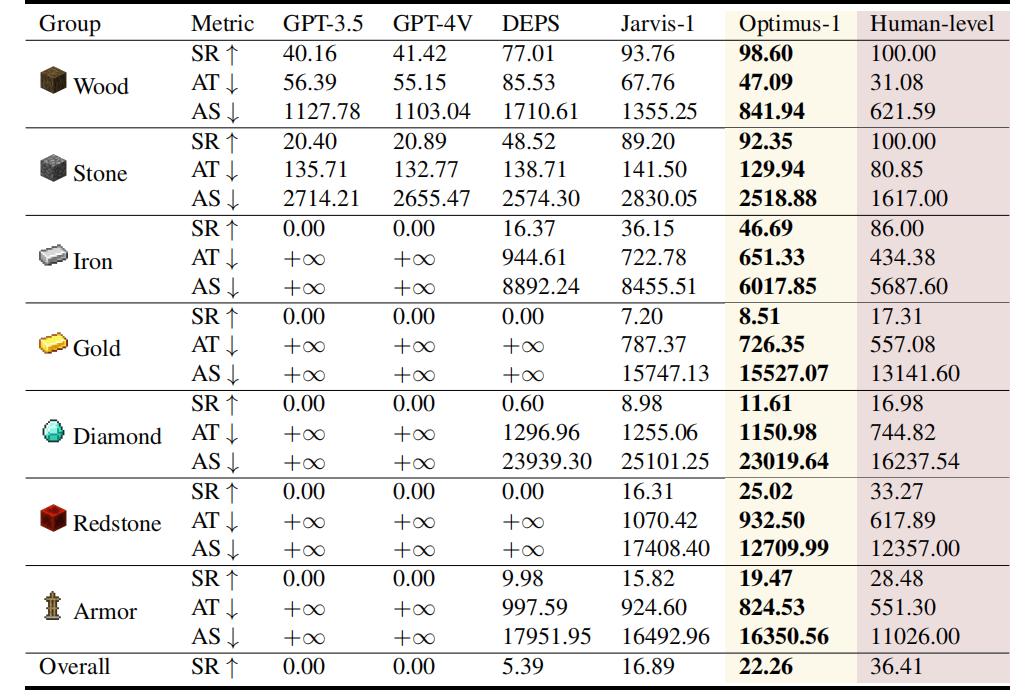

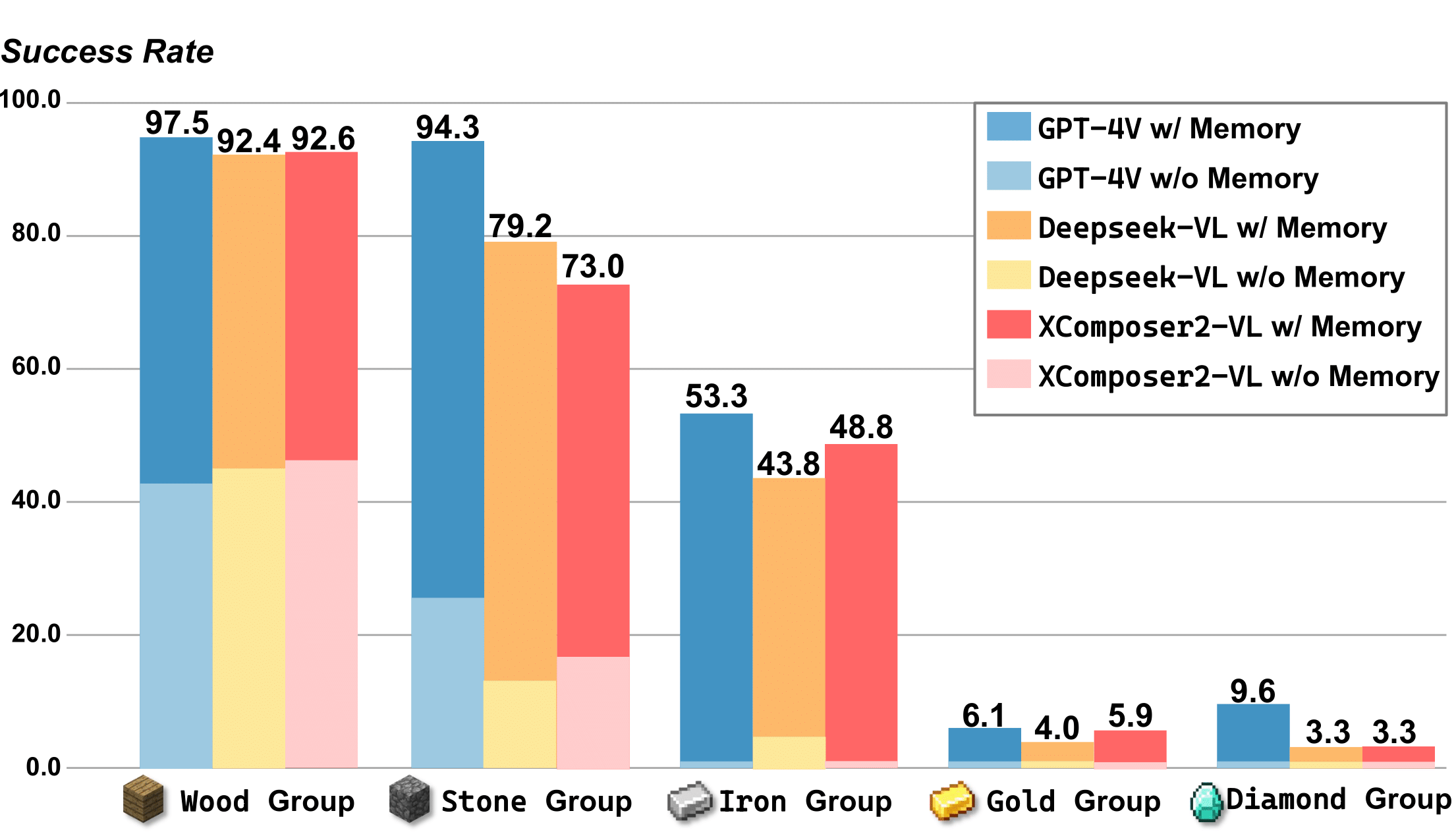

Building a general-purpose agent is a long-standing vision in the field of artificial intelligence. Existing agents have made remarkable progress in many domains, yet they still struggle to complete long-horizon tasks in an open world. We attribute this to the lack of the world knowledge and experience needed to guide agents through a variety of long-horizon tasks. In this paper, we propose a Hybrid Multimodal Memory module to address these challenges. It 1) transforms knowledge into a Hierarchical Directed Knowledge Graph (HDKG) that allows agents to explicitly represent and learn world knowledge, and 2) summarises historical information into an Abstracted Multimodal Experience Pool (AMEP) that provides agents with rich references for in-context learning. On top of the Hybrid Multimodal Memory module, we construct a multimodal, multimodular agent, Optimus-1, in Minecraft with a dedicated Knowledge-Guided Planner and Experience-Driven Reflector, which improve planning and reflection on long-horizon tasks. Extensive experimental results show that Optimus-1 significantly outperforms all existing agents on a challenging long-horizon task benchmark and exhibits near human-level performance on many tasks. In addition, we use various Multimodal Large Language Models (MLLMs) as the backbone of Optimus-1, and the results show that Optimus-1 exhibits strong generalisation with the help of the Hybrid Multimodal Memory module, outperforming the GPT-4V baseline on many tasks. These results show that Optimus-1 makes a major step towards a general agent with a human-like level of performance.
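To make the idea of a directed knowledge graph guiding planning more concrete, the following is a minimal sketch, not the implementation used in Optimus-1. It assumes the graph can be stored as a mapping from each item to the items it directly requires, and that a plan is a prerequisite-first ordering of the sub-goals reachable from the target. The `REQUIRES` entries and the `plan_for` helper are hypothetical names introduced for illustration only.

```python
from graphlib import TopologicalSorter

# Hypothetical, hand-written fragment of a knowledge graph: each item maps to
# the items it directly requires. These entries are illustrative only and are
# not taken from the paper.
REQUIRES = {
    "wooden_pickaxe": {"planks", "stick", "crafting_table"},
    "crafting_table": {"planks"},
    "stick": {"planks"},
    "planks": {"log"},
    "log": set(),
}


def plan_for(goal, requires):
    """Return the sub-goals needed for `goal`, prerequisites first."""
    # Collect only the part of the graph that is reachable from the goal.
    needed, stack = {}, [goal]
    while stack:
        item = stack.pop()
        if item not in needed:
            needed[item] = requires.get(item, set())
            stack.extend(needed[item])
    # TopologicalSorter takes {node: predecessors} and yields predecessors first.
    return list(TopologicalSorter(needed).static_order())


print(plan_for("stick", REQUIRES))  # ['log', 'planks', 'stick']
print(plan_for("wooden_pickaxe", REQUIRES))
```

A topological order is used here because it guarantees that every material appears before the item that needs it; how Optimus-1 actually turns graph knowledge into a plan is not specified in this section.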

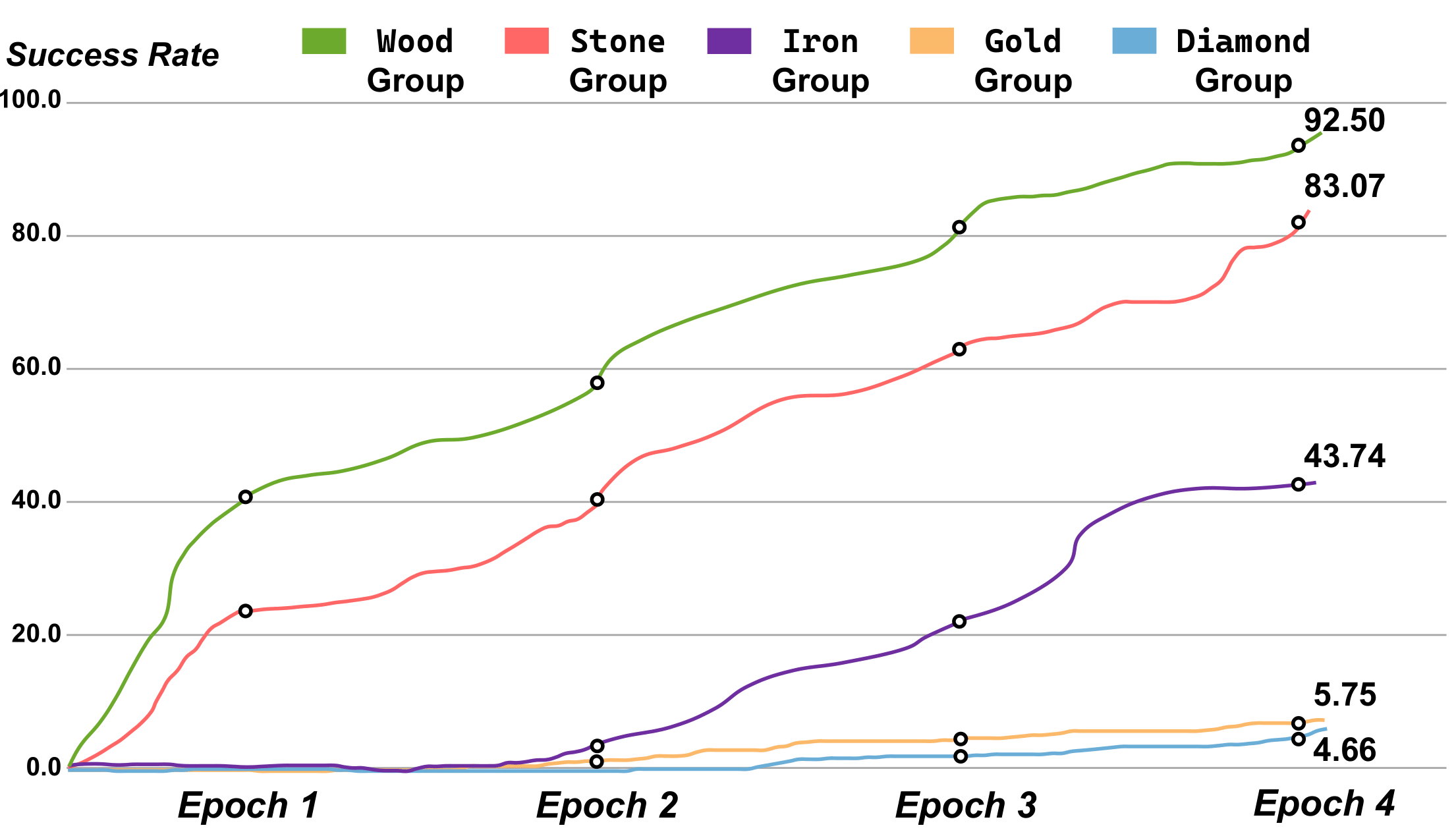

Task groups shown in the figure: Wooden Group, Stone Group, Iron Group, Golden Group, Diamond Group, Armor Group, Redstone Group.

In this paper, we propose the Hybrid Multimodal Memory module, inspired by the major influence of the human long-term memory system on the completion of long-horizon tasks. The module consists of two parts: a Hierarchical Directed Knowledge Graph (HDKG) and an Abstracted Multimodal Experience Pool (AMEP). HDKG provides the world knowledge needed in the agent's planning phase, and AMEP provides refined historical experience for its reflection phase. On top of the Hybrid Multimodal Memory, we construct the multimodal, multimodular agent Optimus-1 in Minecraft. Extensive experimental results show that Optimus-1 outperforms all existing agents on long-horizon tasks. Furthermore, we validate that general-purpose MLLMs equipped with our proposed Hybrid Multimodal Memory, without additional parameter updates, can exceed the powerful GPT-4V baseline. This self-evolution approach provides novel insights and directions for the study of general-purpose agents.
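The role of an experience pool in the reflection phase can be illustrated with a small sketch. This is a text-only simplification under stated assumptions, not the AMEP described in the paper: the real pool is multimodal, whereas here each experience is a short text summary, and retrieval uses plain word overlap (Jaccard similarity) between task names. The `Experience` and `ExperiencePool` classes and the stored entries are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Experience:
    task: str        # short task description
    summary: str     # abstracted summary of what happened
    succeeded: bool  # outcome of the attempt


class ExperiencePool:
    """Toy, text-only stand-in for an experience pool (illustrative only)."""

    def __init__(self):
        self._items = []

    def add(self, experience):
        self._items.append(experience)

    def retrieve(self, task, k=2):
        """Return up to k stored experiences whose task is most similar to `task`."""
        query = set(task.lower().split())

        def overlap(exp):
            # Jaccard similarity between the word sets of the two task names.
            words = set(exp.task.lower().split())
            union = query | words
            return len(query & words) / len(union) if union else 0.0

        return sorted(self._items, key=overlap, reverse=True)[:k]


pool = ExperiencePool()
pool.add(Experience("craft wooden pickaxe", "made planks and sticks first", True))
pool.add(Experience("craft stone pickaxe", "tried without cobblestone", False))
pool.add(Experience("mine iron ore", "used a stone pickaxe underground", True))

# Retrieved entries could be placed in the model's context as references.
for exp in pool.retrieve("craft stone pickaxe", k=2):
    print(exp.task, "|", exp.summary, "|", exp.succeeded)
```

Keeping the outcome flag alongside each summary lets retrieved references include failed attempts as well as successful ones; whether and how Optimus-1 does this is not stated in this section.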

@inproceedings{li2024optimus,
  title={Optimus-1: Hybrid Multimodal Memory Empowered Agents Excel in Long-Horizon Tasks},
  author={Li, Zaijing and Xie, Yuquan and Shao, Rui and Chen, Gongwei and Jiang, Dongmei and Nie, Liqiang},
  booktitle={NeurIPS},
  year={2024}
}